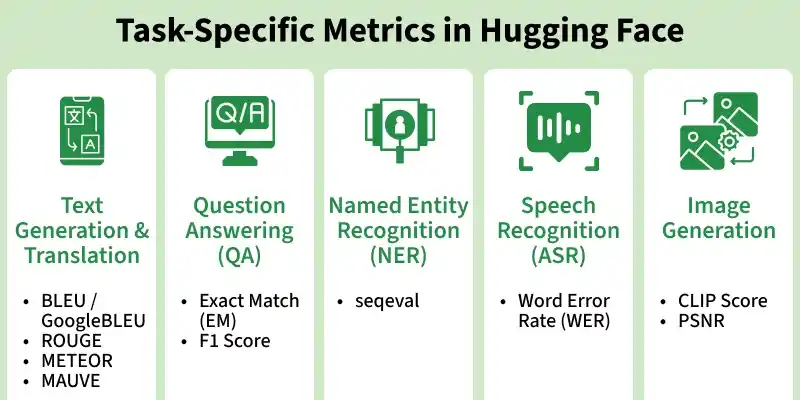

Task-specific metrics evaluate models based on their objective such as text generation, question answering or speech recognition. Hugging Face provides these through the evaluate library for meaningful and context aware evaluation.

- Evaluate models based on the task they perform.

- Provides more accurate insights than generic metrics.

- Available through the Hugging Face evaluate library.

- Enables better and context-aware model evaluation.

Common Task-Specific Metrics

1. Text Generation and Translation

Text generation and translation tasks focus on producing human like text or converting text between languages. Evaluation is done using metrics that compare generated text with reference text.

- BLEU / GoogleBLEU: Measures word-level overlap between generated and reference text.

- ROUGE: Measures n-gram overlap, mainly used for summarization tasks.

!pip install rouge_score

!pip install evaluate

from transformers import pipeline

import evaluate

generator = pipeline("text-generation", model="gpt2")

output = generator("Machine learning is", max_length=20)[0]["generated_text"]

bleu = evaluate.load("bleu")

rouge = evaluate.load("rouge")

print("BLEU:", bleu.compute(predictions=[output], references=[["Machine learning is a field of AI"]]))

print("ROUGE:", rouge.compute(predictions=[output], references=["Machine learning is a field of AI"]))

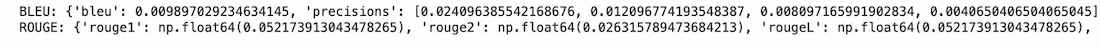

Output:

2. Question Answering (QA)

QA models extract answers from a given context. Evaluation checks how closely the predicted answer matches the actual answer.

- Exact Match (EM): Checks if prediction exactly matches the correct answer.

- F1 Score: Measures partial overlap between predicted and actual answers.

from transformers import pipeline

import evaluate

qa = pipeline("question-answering")

context = "AI is artificial intelligence."

question = "What is AI?"

result = qa(question=question, context=context)

squad_metric = evaluate.load("squad")

predictions = [{'prediction_text': result["answer"], 'id': '1'}]

references = [{'answers': {'answer_start': [6], 'text': ["artificial intelligence"]}, 'id': '1'}]

results = squad_metric.compute(predictions=predictions, references=references)

print(f"Prediction: {result['answer']}")

print(f"F1 Score: {results['f1']}")

print(f"Exact Match: {results['exact_match']}")

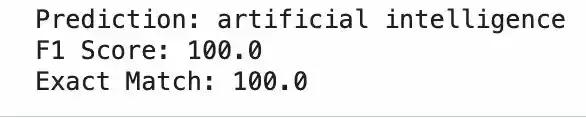

Output:

3. Named Entity Recognition (NER)

NER identifies entities like names and organizations in text. Evaluation focuses on correct labeling of sequences.

- seqeval (Precision and F1): Evaluates predicted entity tags against true labels.

!pip install seqeval

from transformers import pipeline

import evaluate

ner = pipeline("ner", aggregation_strategy="simple")

preds = ner("Elon Musk founded SpaceX")

predictions = [["B-PER", "I-PER", "O", "B-ORG"]]

references = [["B-PER", "I-PER", "O", "B-ORG"]]

metric = evaluate.load("seqeval")

results = metric.compute(predictions=predictions, references=references)

print(preds)

print("Precision:", results["overall_precision"])

print("Recall:", results["overall_recall"])

print("F1 Score:", results["overall_f1"])

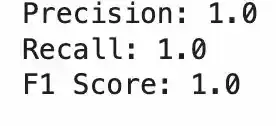

Output:

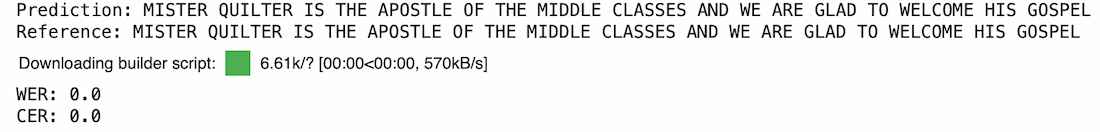

4. Speech Recognition (ASR)

Speech recognition converts audio into text. Evaluation measures transcription accuracy.

- Word Error Rate (WER): Measures errors in predicted text.

- Character Error Rate (CER): Measures character-level errors.

!pip install -q transformers datasets evaluate librosa soundfile

!pip install jiwer

from transformers import pipeline

from datasets import load_dataset

import evaluate

asr = pipeline(

"automatic-speech-recognition",

model="facebook/wav2vec2-base-960h"

)

dataset = load_dataset("hf-internal-testing/librispeech_asr_dummy", "clean", split="validation")

audio = dataset[0]["audio"]

reference = dataset[0]["text"]

pred = asr(audio)["text"]

print("Prediction:", pred)

print("Reference:", reference)

wer = evaluate.load("wer")

cer = evaluate.load("cer")

print("WER:", wer.compute(predictions=[pred], references=[reference]))

print("CER:", cer.compute(predictions=[pred], references=[reference]))

Output:

5. Image Generation

Image generation models create images from text prompts. Evaluation checks structural similarity and pixel-level quality.

- SSIM: Measures structural similarity between the generated image and a reference across brightness, contrast and edges. Score of 1.0 means identical; above 0.9 indicates very high similarity.

- PSNR: Measures pixel level fidelity by calculating how much noise exists relative to the original signal, expressed in decibels (dB). Above 30 dB indicates good quality.

!pip install diffusers transformers accelerate evaluate pillow torchvision

!pip install scikit-image pillow -q

from diffusers import StableDiffusionPipeline

import torch

from skimage.metrics import structural_similarity as ssim

from skimage.metrics import peak_signal_noise_ratio as psnr

from PIL import Image

import numpy as np

pipe = StableDiffusionPipeline.from_pretrained(

"runwayml/stable-diffusion-v1-5",

torch_dtype=torch.float16

).to("cuda" if torch.cuda.is_available() else "cpu")

prompt = "A cat sitting on a chair"

image = pipe(prompt).images[0]

image.save("generated.png")

img = np.array(Image.open("generated.png").convert("RGB"))

noisy = np.clip(img + np.random.normal(0, 10, img.shape), 0, 255).astype(np.uint8)

ssim_score = ssim(img, noisy, channel_axis=2, data_range=255)

psnr_score = psnr(img, noisy, data_range=255)

print(f"SSIM : {ssim_score:.4f} (1.0 = identical, > 0.9 = very similar)")

print(f"PSNR : {psnr_score:.2f} dB (> 30 dB = good quality)")

Output:

Download full code from here

Advantages

- Metrics align closely with the specific task, making evaluation more relevant and meaningful.

- Provide a standardized way to compare model performance consistently.

- Easy to integrate into existing machine learning pipelines.

- Can be applied across domains like text, speech and vision.

Limitations

- Often rely on surface level comparisons and may miss deeper meaning.

- Effectiveness can vary depending on the data distribution.

- Some tasks still require human evaluation for accurate assessment.

- Provide approximate results rather than perfect measures of performance.